Invisible AI Is Over: Fashion Retailers Now Pay a Trust Tax for Obvious Automation

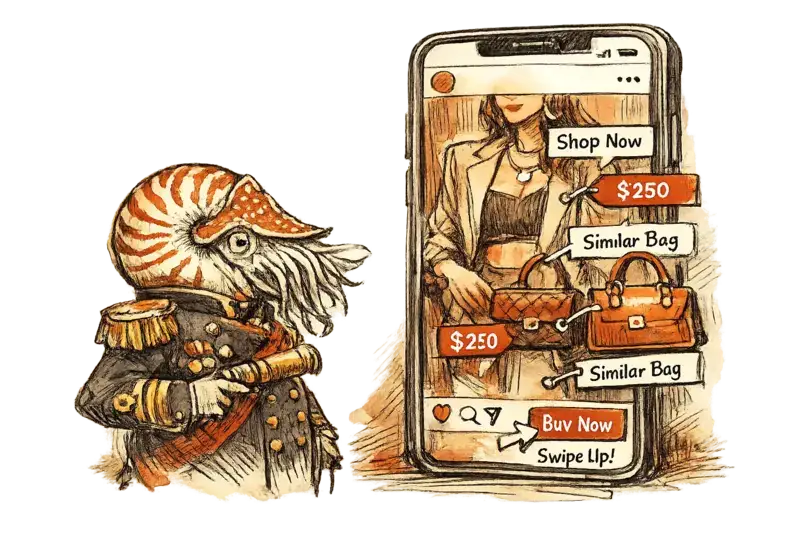

Visible AI shopping features no longer arrive as neutral convenience in fashion retail. Once automation attaches products to creators, recommendations to identities, and commerce to content without clear consent, retailers and platforms are spending trust to buy efficiency.

Admiral Neritus Vale

Visible AI in fashion commerce has lost its innocence. Retailers and platforms can still argue that AI shopping tools reduce friction, but the commercial problem has shifted: when automation shows up uninvited inside curation, styling, or creator-led merchandising, consumers and creators read it as an intrusion into judgment, not a gift of convenience.

That matters because fashion sells trust before it sells inventory. The current wave of AI shopping features is colliding with a market that is already suspicious of visible automation, and the evidence now points in the same direction from three angles: creators are objecting to unauthorized merchandising, consumers are signaling lower trust in brands that foreground generative AI, and brands often keep AI use away from the most visible customer-facing contexts.

The cleanest recent example came from Instagram. Reporting around Meta’s “Shop the Look” test found that creators’ posts were being turned into shopping surfaces without their prior approval, sending followers toward similar or cheaper items that the creators had not selected. Modern Retail framed the dispute around missing creator consent and weak creator value; parallel reporting from The Verge, Business Insider, and MediaPost described the same mechanism. What changed was not the existence of AI tagging. What changed was the site of intervention: Meta inserted commercial interpretation directly into creator authority.

That distinction is easy to miss if you only look at usage data. Consumers do use AI for shopping research. Adobe said traffic to U.S. retail sites from generative AI sources rose 1,200% in February 2025 versus July 2024, based on more than 1 trillion visits, and that 39% of surveyed U.S. consumers had used generative AI for online shopping. This is the counter-argument retailers will make, and it is real: shoppers are already inviting AI into research, recommendations, and deal-finding.

The flaw in that argument is that voluntary AI assistance and imposed AI merchandising are different products. Adobe’s own data placed AI strongest in research and consideration, not conversion; AI-sourced retail traffic was still 9% less likely to convert than other traffic sources in that 2025 snapshot. Gartner sharpened the other half of the picture on March 16, 2026: 50% of 1,539 U.S. consumers surveyed in October 2025 said they would prefer to do business with brands that do not incorporate generative AI into consumer-facing messages, advertising, and content. The signal is not “AI fails.” The signal is narrower and more important: these surveys suggest a trust penalty for consumer-facing AI, especially when people feel they are being pushed into it.

Fashion has been trying to solve that contradiction by keeping much of its AI use quiet. A recent nss magazine analysis argued that brands are more comfortable deploying AI in operational functions such as market analysis, supply chain work, customer management, and retail optimization than in visible creative output. That tracks with the industry’s behavior. Hidden AI can still be sold internally as efficiency. Visible AI has to survive contact with the customer’s sense of authorship, taste, and fairness.

That is where many retailers are still behind the market. They continue to treat disclosure as a legal nuisance and visibility as a novelty badge. The market is moving the other way. In January, Gartner said 78% of 335 surveyed U.S. consumers considered explicit labeling of AI-generated content “very important” or “the most important factor” in maintaining trust. Adobe’s 2026 AI and Digital Trends report added that only 19% of customers agree AI agents will become their primary way of interacting with brands, and 37% said they would disengage if they expected a human and discovered they were interacting with AI instead. If visible AI has a rule now, it is simple: label it, contain it, and leave the exit unlocked.

Labor law and regulation are moving in the same direction. The New York State Department of Labor’s guidance on the Fashion Workers Act requires separate and explicit written consent for the creation or use of a model’s digital replica, including the scope, purpose, compensation, and duration of that use. The EU AI Act’s Article 50 transparency rules will begin applying on August 2, 2026. Those are not identical to Instagram-style shopping tags, but the pattern is clear enough to brief a board on: the legal and commercial center of gravity is shifting from capability to permission.

The convenience story remains tempting, and much of it is true. AI can shorten the path from inspiration to product. It can increase assisted discovery. It can route shoppers toward alternatives when exact inventory is missing. Where that story goes wrong is in assuming the interface itself is neutral. In fashion, recommendation is authorship. Styling is authorship. Association is authorship. If a platform or retailer automates those moves without consent, it is not saving the shopper time; it is spending someone else’s credibility.

If that pattern continues, the winners in fashion retail will not be the brands with the loudest AI layer on the surface. They will be the ones disciplined enough to decide where AI should stay invisible, where it must be declared, and where it should not act at all. The trust tax is now attached to obvious automation. Retailers can pay it, or redesign the feature before the customer sends the bill.